Using review instances to preview changes

Last Updated: November 18, 2025

If you need to do additional testing and verification outside your local development environment, you can deploy changes from a branch or pull request to a review instance. Review instances let you view changes to backend services and frontend applications.

Reviewing visual or content changes to VA.gov

Review instances can be created manually by running a Jenkins job.

Automatic creation

Automatic creation of review instances on every push has been disabled, and the CI jobs that handled this process have been removed. You must now create review instances manually, as needed.

Manual creation

To create a review instance, trigger the deploy job manually. The job deploys a vets-website branch and a vets-api branch together, so you can also use it to test changes made in both repositories at the same time.

1. Visit http://jenkins.vfs.va.gov/job/deploys/job/vets-review-instance-deploy/ and log in. Jenkins is available only on the VA network (CAG/AVD or GFE).

2. Select "Build with Parameters."

3. Specify the branch names for api_branch and web_branch. These branches are deployed together to the review instance. If you are not testing changes in one of the repositories, use main for web_branch or master for api_branch.

4. Monitor the job until the build completes.
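Jenkins can also start a parameterized job through its remote access API by POSTing the parameters to the job's buildWithParameters endpoint. The sketch below assumes you have generated a Jenkins API token and exported it, with your username, as JENKINS_USER and JENKINS_TOKEN. The trigger_review_instance function and the DRY_RUN switch are hypothetical names, not part of the Platform tooling, and the sketch has not been verified against the VA Jenkins configuration. It is subject to the same VA network requirement as the web UI.

```shell
# Hypothetical helper: queue vets-review-instance-deploy through the Jenkins
# remote access API instead of the "Build with Parameters" form.
# Assumes JENKINS_USER and JENKINS_TOKEN (a Jenkins API token) are exported.
JOB_URL="http://jenkins.vfs.va.gov/job/deploys/job/vets-review-instance-deploy"

trigger_review_instance() {
  local api_branch="${1:-master}"   # vets-api branch; master if untouched
  local web_branch="${2:-main}"     # vets-website branch; main if untouched
  local url="${JOB_URL}/buildWithParameters"

  # With DRY_RUN set, print the request instead of sending it.
  if [ -n "${DRY_RUN:-}" ]; then
    echo "POST ${url} api_branch=${api_branch} web_branch=${web_branch}"
    return 0
  fi

  # --data-urlencode sends each parameter in a form-encoded POST body.
  curl -fsS -X POST -u "${JENKINS_USER}:${JENKINS_TOKEN}" \
    --data-urlencode "api_branch=${api_branch}" \
    --data-urlencode "web_branch=${web_branch}" \
    "${url}"
}
```

For example, DRY_RUN=1 trigger_review_instance my-api-branch my-web-branch prints the request it would send; drop DRY_RUN to queue the build, then monitor the job in Jenkins.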

Access and usage

To access review instances, see “How Do I Connect to My Deployed Instance?”

Common tasks

Both the vets-website and vets-api processes are managed with Docker Compose. The code is stored in the dsva user's home directory. You might need to perform the following common tasks in an SSH session on the review instance.
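Every command in this section changes into one of the checkouts and then runs docker-compose with the same -f docker-compose.review.yml argument, so you may find it convenient to wrap that prefix in shell functions. This is a sketch that assumes you are logged in as the dsva user, so that $HOME is the directory holding both checkouts; review_web and review_api are hypothetical names you would define in your own shell session.

```shell
# Hypothetical shorthands for the review-instance docker-compose commands.
# Assumes $HOME is the dsva user's home directory containing both checkouts.

# Run docker-compose against the vets-website review compose file.
review_web() {
  (cd "$HOME/vets-website" && docker-compose -f docker-compose.review.yml "$@")
}

# Run docker-compose against the vets-api review compose file.
review_api() {
  (cd "$HOME/vets-api" && docker-compose -f docker-compose.review.yml "$@")
}
```

For example, review_web logs -f follows the vets-website logs, and review_api exec web bundle exec rails c opens a Rails console. The subshell leaves your current directory unchanged, and && skips docker-compose entirely if the checkout directory is missing rather than running it from the wrong place.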

vets-website

To view the build logs and the access logs for the development web server, enter the following command (press Ctrl-C to exit):

cd ~/vets-website; docker-compose -f docker-compose.review.yml logs -f

To rebuild vets-website after modifying the code, enter the following command:

cd ~/vets-website; docker-compose -f docker-compose.review.yml restart vets-website

You cannot view the instance while the website is building: it returns 502 errors until the build finishes and the server restarts. To check progress, or if you suspect the build failed, view the logs with the logs command above.
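While a rebuild is in progress, you can poll the instance instead of refreshing the browser. The sketch below requests a URL with curl and stops once the response is something other than a 502 or no response at all. The function name, its arguments, and the default of 60 checks 10 seconds apart are illustrative assumptions, and you supply your own instance's URL. It only tells you that the server is responding again; use the logs command to find out why a build failed.

```shell
# Hypothetical helper: wait until a review instance stops returning 502
# after a vets-website rebuild. Usage: wait_for_web_build URL [attempts] [delay]
wait_for_web_build() {
  local url="$1"
  local attempts="${2:-60}"   # number of checks before giving up
  local delay="${3:-10}"      # seconds to sleep between checks
  local i=1 code

  while [ "$i" -le "$attempts" ]; do
    # -w prints only the HTTP status; curl reports 000 when it gets no response.
    code=$(curl -s -o /dev/null --max-time 10 -w '%{http_code}' "$url" || true)
    case "$code" in
      502|000|"")
        echo "check $i/$attempts: HTTP ${code:-none}, still building" >&2 ;;
      *)
        echo "ready (HTTP $code)"
        return 0 ;;
    esac
    # Sleep only if another check follows.
    if [ "$i" -lt "$attempts" ]; then
      sleep "$delay"
    fi
    i=$((i + 1))
  done
  echo "gave up after $attempts checks" >&2
  return 1
}
```

For example, wait_for_web_build "$REVIEW_URL" 30 20 checks up to 30 times, 20 seconds apart, where REVIEW_URL is a placeholder for your instance's address.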

To troubleshoot, start a shell session in the build environment using the following command:

cd ~/vets-website; docker-compose -f docker-compose.review.yml exec vets-website bash

vets-api

To force a restart of Puma or Sidekiq, enter the following command:

cd ~/vets-api; docker-compose -f docker-compose.review.yml restart web

On review instances, Puma runs with RAILS_ENV=development, which enables live reloading of some files but not all. Some code changes will not be visible until you force a restart. The web service in the vets-api compose file runs both Puma and Sidekiq.

To view stdout of the Puma and Sidekiq processes, enter the following command (press Ctrl-C to exit):

cd ~/vets-api; docker-compose -f docker-compose.review.yml logs -f web

Omit -f if you only want to view the logs once instead of following them. In development mode, vets-api does not write its application log to stdout. It logs to log/development.log, as it would on a local installation.
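Because the Rails log goes to a file rather than stdout, one way to follow it is to run tail inside the web container. This sketch assumes the container's working directory is the vets-api application root (the rails c command in this section relies on the same thing), so the relative path log/development.log resolves; api_dev_log is a hypothetical helper name.

```shell
# Hypothetical helper: follow vets-api's Rails log on a review instance.
# Assumes the web container's working directory is the app root, so the
# relative path log/development.log points at the Rails log.
api_dev_log() {
  local lines="${1:-100}"   # how many existing lines to print before following
  (cd "$HOME/vets-api" && docker-compose -f docker-compose.review.yml \
    exec web tail -n "$lines" -f log/development.log)
}
```

Run api_dev_log, or api_dev_log 500 for more history, and press Ctrl-C to stop following.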

To recreate the containers and start over, enter the following command:

cd ~/vets-api; docker-compose -f docker-compose.review.yml down -v; docker-compose -f docker-compose.review.yml up -d

Running this command results in downtime for the instance. The -v flag also removes the compose project's volumes, so any data stored in them is lost.

To open a Rails console, enter the following command:

cd ~/vets-api; docker-compose -f docker-compose.review.yml exec web bundle exec rails c

To get a bash shell in one of the containers, enter the following command:

cd ~/vets-api; docker-compose -f docker-compose.review.yml exec web bash

Cleanup

A review instance is deleted when the non-main branch it was created from is deleted, or when the instance is more than 7 days old.

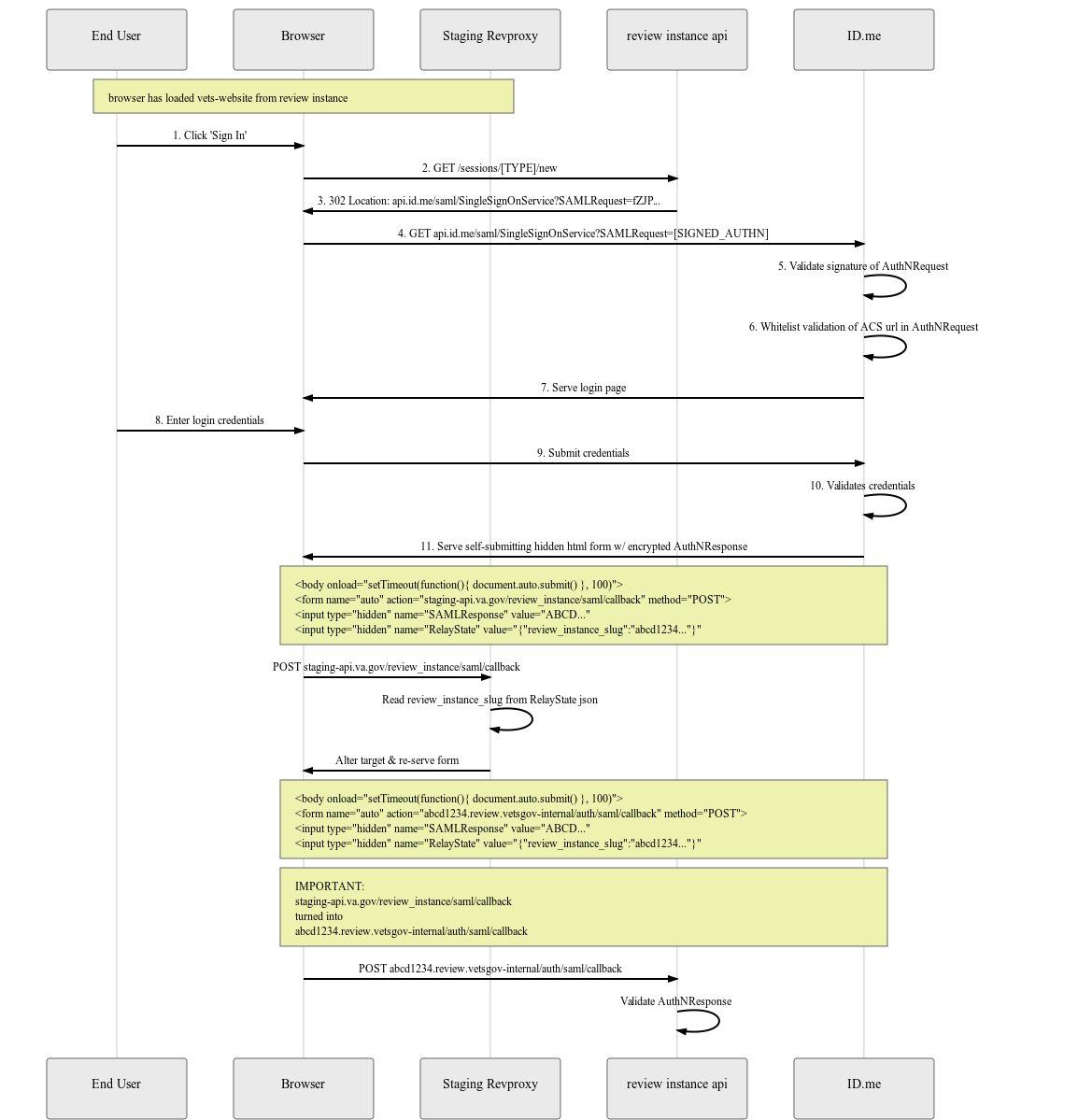

User authentication

Review instances require a special nginx configuration that intercepts the authentication callback to the staging-api.va.gov server and forwards the authentication information to the appropriate review instance. The target instance is identified by the RelayState parameter, whose value is provided to the review instance's vets-api configuration through the REVIEW_INSTANCE_SLUG environment variable.

Implementation details of the authentication for Review Instances

Help and feedback

Get help from the Platform Support Team in Slack.

Submit a feature idea to the Platform.